We Suck at Spotting AI Faces: But Could This One Simple Trick Solve It?

AI has generated thousands of fake social media profile pictures, but there is one common thing that gives them away. We look at the techniques trying to minimise the spread of AI misinformation.

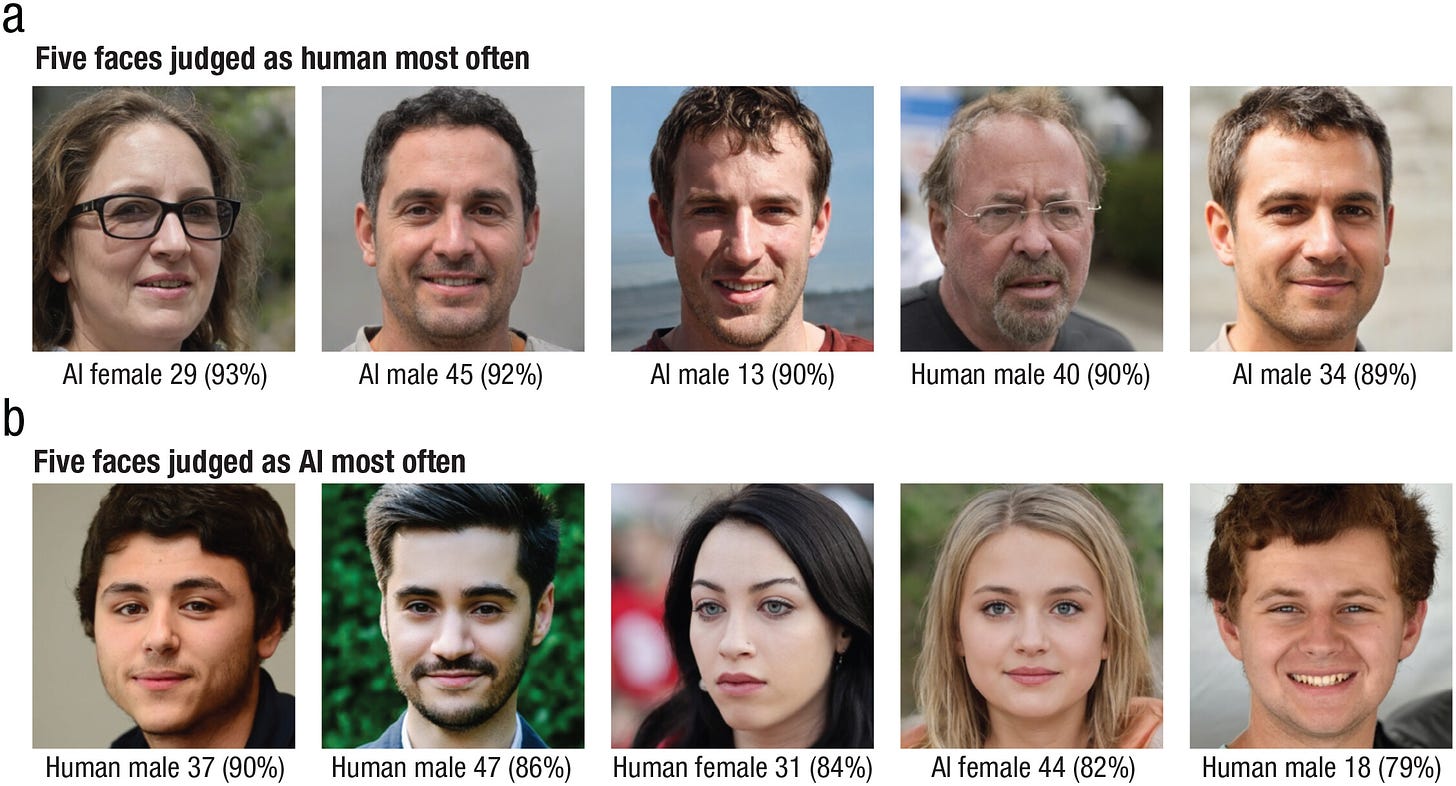

We are really bad at telling AI faces from human ones. In fact, researchers have found that people are more likely to judge an AI face to be human than an actual human face, especially if it is white.1 Just look at the faces below.

All the images above are AI-generated and come from fake Twitter profiles, where they lend authenticity to the content those accounts generate and share.

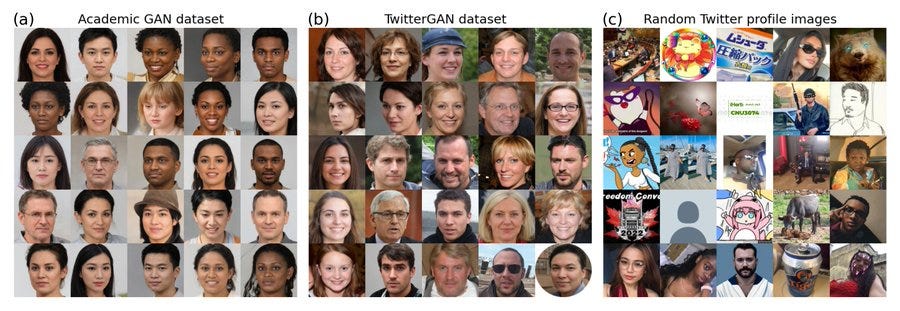

These images have all been generated by a type of machine learning model known as a generative adversarial network (GAN), which is able to create incredibly lifelike human faces. But even though they look convincing on the surface, the authors of a new paper have used a simple trick to estimate which profile pictures are fake.2

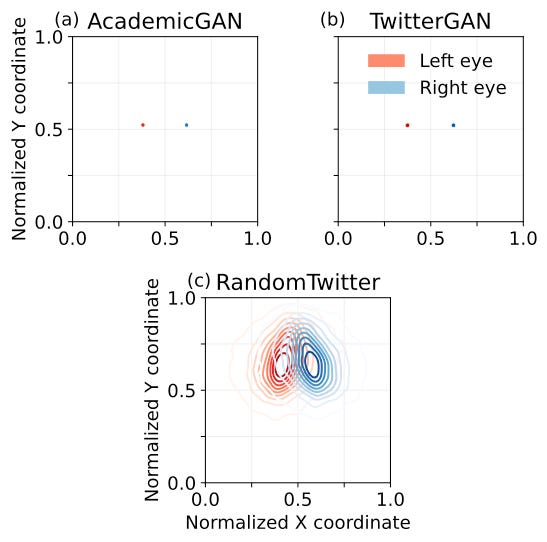

Look at the GAN-generated images in the first two panels below. They look different from one another, but they all have one specific thing in common. Can you spot it?

Researchers noted that the images always place the eyes in the same spot in the picture. Real Twitter profile pictures vary: the face may be a little off centre, turned to the side, or further from the camera. Even though the GAN-generated images look different on the surface, the way they are generated puts the eyes in the same place every time.

You can test this for yourself on sites such as thispersondoesnotexist.com. Try blocking out part of your monitor so that only the eyes are visible. They never stray far from the one spot (see the video below). Researchers used this technique to estimate the number of fake Twitter profiles that were generating, spamming and amplifying content. They judged it to be over 10,000 daily active accounts. This, coupled with text generated by ChatGPT, adds to worries about an AI-enabled wave of misinformation.
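The eye-position check can be sketched in a few lines of Python. This is only an illustration, not the researchers' actual pipeline. It assumes the eye coordinates have already been extracted by some face-landmark tool and scaled to fractions of the image width and height. The function name `eye_spread` and the sample numbers are made up for this example.

```python
from statistics import pstdev

# Hypothetical input format: ((left_x, left_y), (right_x, right_y)) per image,
# with each coordinate given as a fraction of the image width or height.
Eyes = tuple[tuple[float, float], tuple[float, float]]


def eye_spread(images: list[Eyes]) -> float:
    """Average standard deviation of the four eye coordinates across images."""
    rows = [[lx, ly, rx, ry] for (lx, ly), (rx, ry) in images]
    columns = list(zip(*rows))  # one column per coordinate
    return sum(pstdev(column) for column in columns) / len(columns)


# Made-up sample data: eyes that barely move versus eyes that wander.
gan_like = [
    ((0.380, 0.480), (0.620, 0.480)),
    ((0.381, 0.479), (0.619, 0.481)),
    ((0.379, 0.481), (0.621, 0.480)),
]
real_like = [
    ((0.30, 0.40), (0.50, 0.42)),
    ((0.45, 0.55), (0.70, 0.52)),
    ((0.25, 0.35), (0.40, 0.36)),
]

print("GAN-like spread:", eye_spread(gan_like))
print("Real-like spread:", eye_spread(real_like))
```

A small spread across a batch of profile pictures suggests the eyes sit in nearly the same place every time. What counts as "small" would have to be calibrated on real data.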

Researchers and social media companies have been in a battle to detect and remove these fake profiles. As AI's capabilities increase, so too does the difficulty of telling real profiles from fake ones. New strategies are constantly being developed.

I think the consistency and lack of variation in GAN-generated images shows that, even though there is a layer of human-like appearance on top, underneath is an algorithm running the same process. I am reminded of the scenes in The Terminator when the skin is ripped off the face to reveal a metal face with a burning red eye. Like the Terminator, these programs are putting a layer of familiar skin over a piece of machinery. By focussing on the eyes we see these images for what they really are: just mechanical programs.

It should be noted that false positives can creep in with this method, because by statistical chance some real profile pictures have eyes in similar positions. But overall, this technique was accurate at predicting which profile images were likely to be fake when compared with what actual humans use as their profile pictures.
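To see how a false positive arises, here is a minimal sketch with made-up numbers. The reference eye position, the tolerance and the function names are all assumptions for illustration, not values from the paper.

```python
from math import dist

# Assumed reference position of the (left, right) eyes, as fractions of the
# image width and height. These numbers are illustrative only.
REFERENCE = ((0.38, 0.48), (0.62, 0.48))


def is_aligned(eyes, reference=REFERENCE, tol=0.02):
    """True if both eyes lie within `tol` of the reference position."""
    return all(dist(eye, ref) <= tol for eye, ref in zip(eyes, reference))


def false_positive_rate(real_photos, tol=0.02):
    """Share of genuine photos that the alignment check would wrongly flag."""
    flagged = sum(is_aligned(eyes, tol=tol) for eyes in real_photos)
    return flagged / len(real_photos)
```

Widening the tolerance catches more generated faces but also flags more genuine photos whose eyes happen to land near the reference spot.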

The authors note that while this method works for some types of AI-generated faces, the technology is evolving quickly. Other, more sophisticated generators do not show these traits. This means that new ways to detect fake faces will need to be explored.

Deception by Design

It makes sense that these GAN-generated faces fool us, because that is what they have specifically been trained to do.

A generative adversarial network (GAN) is trained by pitting two networks against one another. The first network creates something to try to fool the second network. If it doesn't succeed, it goes back and tries something else, learning and improving all the time.

We can think of one network as a forger (in computer terms, the generator) who is trying to fool an art critic (the discriminator) into thinking that their painting is a genuine masterpiece. At first the forger isn't very good, and the critic easily spots the fakes. But over time the forger learns from its errors and gets better and better, while the critic gets more adept at spotting fakes. If you let this process run on and on, you eventually end up with forgeries so good that the critic cannot tell the difference between them and the real thing. GAN-generated images are ones that have already fooled a program designed to spot fakes. This process is how we have ended up with faces that are so realistic they can fool humans as well.
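The forger-and-critic loop can be shown with a deliberately tiny toy, sketched below under heavy assumptions. Here the "real data" is the single number 4.0, the forger outputs one number `theta`, and the critic is a one-line logistic model. Real face generators use large neural networks trained on many images. All names and values are invented for illustration.

```python
from math import exp


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + exp(-x))


def train_toy_gan(steps: int = 20, lr: float = 0.05, real: float = 4.0):
    """Return (theta, w, b) after alternating critic and forger updates."""
    theta = 0.0      # forger: the single number it produces
    w, b = 0.0, 0.0  # critic: D(x) = sigmoid(w * x + b), its belief that x is real
    for _ in range(steps):
        # Critic step: gradient ascent on log D(real) + log(1 - D(fake)).
        d_real = sigmoid(w * real + b)
        d_fake = sigmoid(w * theta + b)
        w += lr * ((1 - d_real) * real - d_fake * theta)
        b += lr * ((1 - d_real) - d_fake)
        # Forger step: gradient ascent on log D(fake), so the fake looks more real.
        d_fake = sigmoid(w * theta + b)
        theta += lr * (1 - d_fake) * w
    return theta, w, b


theta, w, b = train_toy_gan()
print("forger output after training:", theta)
```

In the early steps the critic learns that larger numbers look more real, and the forger's output drifts upward toward the real value. Plain updates like these can overshoot and oscillate if left running, which is one reason practical GAN training needs extra care.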

Video - an example of randomly generated images from thispersondoesnotexist.com. I have (very badly) superimposed an image to highlight how the eyes always stay in the one position.

Are we looking for the wrong things?

Multiple studies have found that we are bad at telling fake faces from real ones. It turns out that when humans look at faces to tell if they are AI, we look at the wrong things. Another recent study asked participants to judge whether faces were AI or human, and then also asked them questions about the faces themselves. How familiar is the face? How symmetrical? How attractive?3

Of the five faces participants said were most likely to be human, four were AI. Of the five faces judged to be AI most often, only one was actually AI, leaving four real humans being told they looked like AI.

Those who were tested focused on things like how familiar the face looked or how it was proportioned. Faces were more likely to be judged as human if they were more proportional, alive in the eyes, familiar, attractive, smooth-skinned and less memorable. For example, if part of a face didn't fit with the rest, it was more likely to be judged as AI. Researchers dubbed the resulting tendency to perceive AI faces as more real than human ones "AI hyperrealism". However, the GAN process doesn't work like this. This method of creating faces tends to find the most average and unmemorable type of face.

GANs are less likely than real life to present a face that is disproportioned or asymmetrical. GAN-generated faces are distinct in their averageness. Another study found that synthetic faces were, on average, seen as more trustworthy than real human faces.4 How familiar a face looks could affect how much we are likely to trust it.

Miller et al. (2023) also tested a model programmed to look specifically at human-perceived attributes, such as distinctiveness/averageness, memorability, familiarity and attractiveness, in order to detect AI-generated faces. Using some of these features, the model was able to detect GAN-generated faces accurately.

Why is GAN detection a big deal?

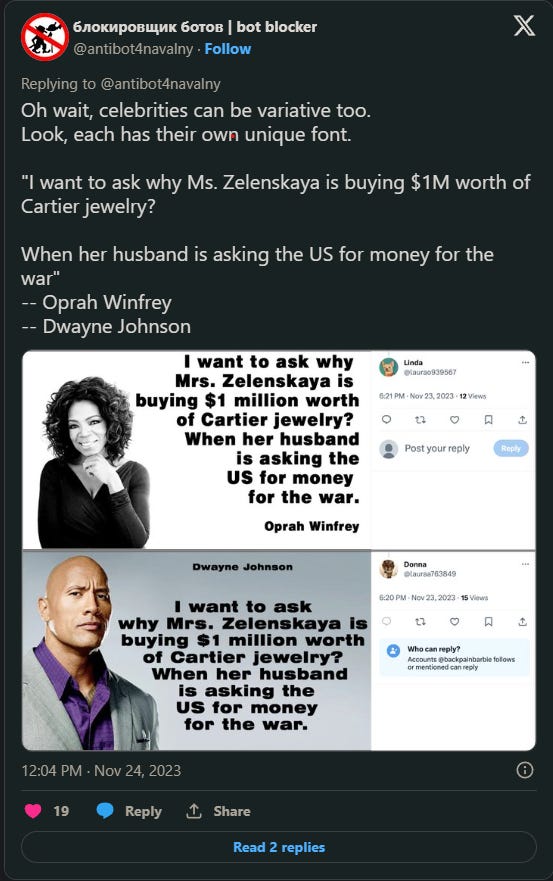

The worry about fake social media accounts is growing, especially as this is an election year in the US and most likely the UK. The fact that AI can generate thousands of profile pictures, as well as tailored content, makes life much easier for those peddling misinformation. The majority of the fake profiles that use GAN-generated faces are used for "astroturfing". This is the practice of making a Tweet or an account seem like it comes from the community or grassroots when it is actually a cover for an organisation or for political, advertising or PR purposes. This isn't necessarily a new phenomenon, but AI is making it much simpler.

The purpose of these accounts is to look real enough. They often feature real-sounding names, likely AI-generated, as well as a short bio or even contact phone numbers. These accounts commonly engage in 'coordinated amplification' - posting the same message on many different accounts, or sharing the same Tweets through numerous accounts. Take, for example, the spate of celebrity quotes about the Ukraine war.
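One simple way to surface this kind of amplification is to group posts by their text and see which messages appear across many accounts. The sketch below is a hypothetical illustration, not a method from the studies cited here. The function name `find_amplified`, the `(account, text)` input format and the threshold of three accounts are all assumptions.

```python
from collections import defaultdict


def find_amplified(posts, min_accounts=3):
    """Map each repeated message to the set of accounts that posted it.

    `posts` is an iterable of (account, text) pairs. Texts are compared after
    lowercasing and collapsing whitespace, so trivial variations still match.
    """
    accounts_by_text = defaultdict(set)
    for account, text in posts:
        key = " ".join(text.lower().split())
        accounts_by_text[key].add(account)
    return {
        text: accounts
        for text, accounts in accounts_by_text.items()
        if len(accounts) >= min_accounts
    }
```

Identical text on its own proves little, since genuine users also repost popular messages, so a result like this is a lead for further checking rather than a verdict.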

The political motives behind misinformation

It is widely known that misinformation and disinformation can be politically motivated and pose a threat to democratic processes. Consider the political motivation behind the quotes above: these are coordinated campaigns by highly organised groups. Fake Twitter or Facebook accounts post the same content, but then also share and reshare content from other fake accounts.

A recent Wired article points out that loopholes have also allowed this information to spread in the form of Facebook ads. Other posts feature doctored images of celebrities' verified Instagram accounts, adding legitimacy to the claims in the posts. Worryingly, there is a broader trend of social media organisations scaling back their efforts to combat misinformation. A report from the not-for-profit organisation Free Press found that companies such as Meta, X and YouTube have rolled back policies on content moderation, as well as cutting jobs in areas such as trust and safety. This trend, along with innovations in AI technology and deepfakes, provides a worrying outlook for the future of digital information.

AI Generated Faces for Social Good

To end on a positive note, there is hope this technology can also be used for the good of society. A program called ChildGAN has been proposed which could help in identifying long-lost children.5 The program is designed to realistically recreate the aging process, taking pictures of a lost child and showing what they might look like in 5, 10 or 20 years. Researchers hope this could help in reconnecting victims who were lost at a young age through child trafficking or abduction.

So how can you test if a face is AI Generated?

If you have a large enough set of images, then running a test on where the eyes are does seem viable.

If you are looking at an individual image, check for any distortions or warps in the background.

Asymmetry might actually be a sign that a face is real.

Check the expression: does the face have a plain expression?

If the face is more distinctive and more unfamiliar, it is more likely to be human. A face that is familiar and less distinctive is more likely to be AI.

Look at the lighting and shadows: are there any inconsistencies?

While these tips could help for now, AI images are constantly getting better and new techniques will be needed to detect them. Critical thinking is also necessary: if the face is on a Twitter profile, look at what else the account is posting and why.

While the method of detecting eye placement works for some types of AI-generated faces, the technology is evolving quickly. Other, more sophisticated generators do not show these traits. Other giveaways in GAN-generated images are also likely to be fixed quickly. This means that new ways to detect images will need to be explored. As the technology becomes more and more capable of creating realistic images, researchers and fact checkers will be in a constant battle to develop new detection techniques.

1. Miller, E. J., Steward, B. A., Witkower, Z., Sutherland, C. A. M., Krumhuber, E. G., & Dawel, A. (2023). AI Hyperrealism: Why AI Faces Are Perceived as More Real Than Human Ones. Psychological Science, 34(12), 1390-1403. https://doi.org/10.1177/09567976231207095

2. Yang, K. C., Singh, D., & Menczer, F. (2024). Characteristics and prevalence of fake social media profiles with AI-generated faces. arXiv preprint arXiv:2401.02627.

3. Miller et al. (2023).

4. Nightingale, S. J., & Farid, H. (2022). AI-synthesized faces are indistinguishable from real faces and more trustworthy. Proceedings of the National Academy of Sciences, 119(8), e2120481119.

5. Chandaliya, P. K., & Nain, N. (2022). ChildGAN: Face aging and rejuvenation to find missing children. Pattern Recognition, 129, 108761.