The AI Voice Clone Marketplace: Trade Your Voice and Speak Any Language Instantly

A new platform allows users to build and trade AI clones of their own voice and dub it into 30 languages. I test the latest generative voice AI to see if AI detection tools can tell real from fake.

Ever wondered how you would sound speaking Chinese, Spanish or Bulgarian? Now you can find out with the newest iterations of generative voice AI.

The company ElevenLabs has launched a slew of new products in the area of generative voice AI, claiming to be the "most realistic, versatile and contextually-aware AI audio platform". Its new services allow users to generate and tweak an original AI-generated voice, or to clone their own voice and turn it into an AI model. More than that, this week the company launched its dubbing studio, an automatic speech-to-speech voice conversion tool that translates into almost 30 different languages.

Now, with a voice clone of yourself, you are able to hear how you would sound speaking Danish, Filipino or Malay. You can also instantly dub YouTube videos into any one of these languages just by copying the link.

Tailoring AI Voices

If you don’t want a clone of your own voice, you can create a new one through the generative voice AI tool. You can choose male or female; young, middle-aged or old; and an American, British, African, Australian or Indian accent. You can even choose how strong or weak you want the accent to be. The company promises that no two voices it generates will sound the same.

Unfortunately, the Australian accent was non-existent and it sounded like a generic American accent. I tried switching through age and gender parameters without any of them sounding remotely Australian. The other voices fared better, even if they were bordering on stereotypes.

An “Australian” Young Female Voice, generated by ElevenLabs

The program then lets you tweak the voice for stability, clarity or similarity enhancement, style and exaggeration. Tweaking provided some slight differences in style, though I wouldn't say it was overly noticeable.

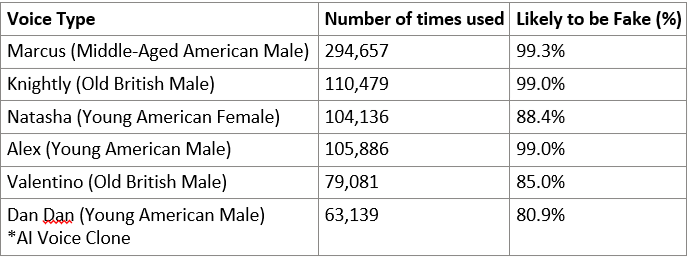

My generative skills were not that great, but other users' voices uploaded to the new marketplace have proved popular. The top voice, Marcus, a middle-aged American in the vein of Morgan Freeman, has been added by almost 300,000 users.

“Marcus” - Described as authoritative and deep

The Dangers of Voice Cloning

While the quality of AI speech has undoubtedly come a long way in the past few years, it has also attracted its fair share of concern. Technology such as that provided by ElevenLabs became the centre of controversy last year on the back of a worrying trend in voice cloning scams.[1] 4Chan moved to ban members for using ElevenLabs to imitate celebrity voices, such as Emma Watson and Joe Rogan, to have them say offensive and abusive things. ElevenLabs' founders acknowledged the concern on Twitter.

In 2019, a business in Europe was reportedly scammed out of hundreds of thousands of dollars after a caller used deepfaked audio of the CEO demanding a bank transfer to the scammers' account. There have been multiple stories of parents receiving calls in which scammers used AI voice cloning to imitate their children. The scammers claimed to have kidnapped the child, used the AI voice to sound authentic, and then demanded a ransom.

With so much information being shared online, we might not think of the biometric data we are leaving behind, which includes our voice imprint. Even small clips from videos on social media could be used to train a new voice clone.

The Battle to Detect Voice Clones

While the quality of voice cloning is getting better, so are the tools used to tell fake voice recordings from real ones. Tools such as Deepfake Total, created by researchers at the German institute Fraunhofer AISEC, take 30-second samples of audio and give a percentage rating of how likely each is to be fake.

I wanted to test the new range of AI voices and professional clones from ElevenLabs to see how convincing they were, both to my ear and to an AI detection tool.

Testing New AI Voices Against Detection Tools

I took as many voice samples as I could from ElevenLabs, both AI cloned voices, and AI generated voices, and ran them through Deepfake Total.

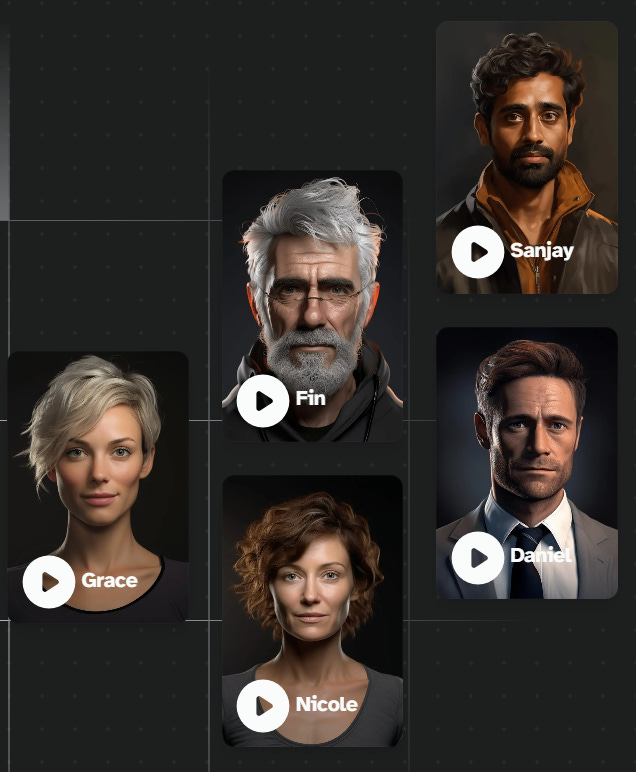

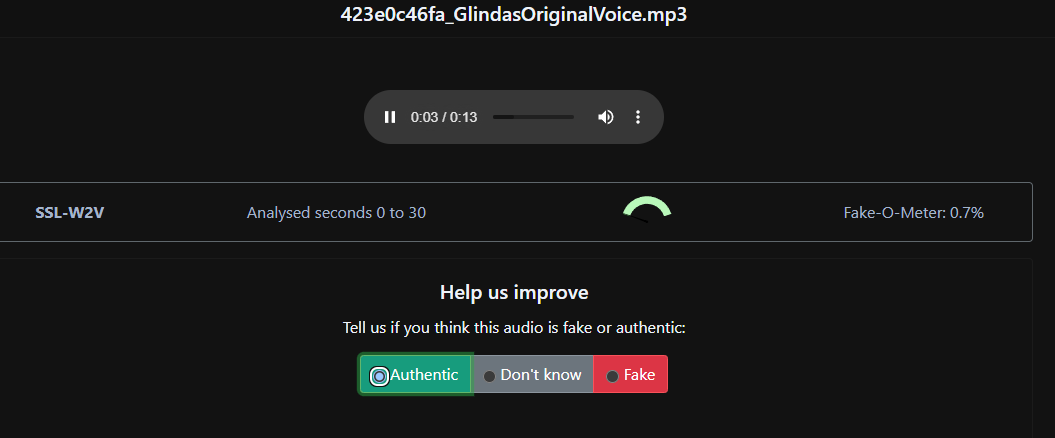

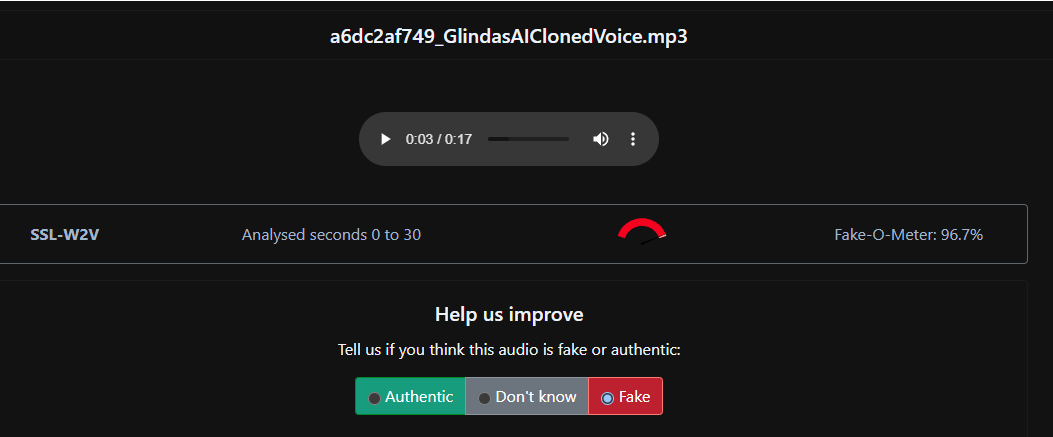

Firstly, I tried the professionally cloned examples on the site's main page. Professionally cloned voices use from 30 minutes to 3 hours of recorded audio, and take around 4 weeks to complete. The first example is below:

“Glinda” Original (not AI) voice

“Glinda” AI Clone

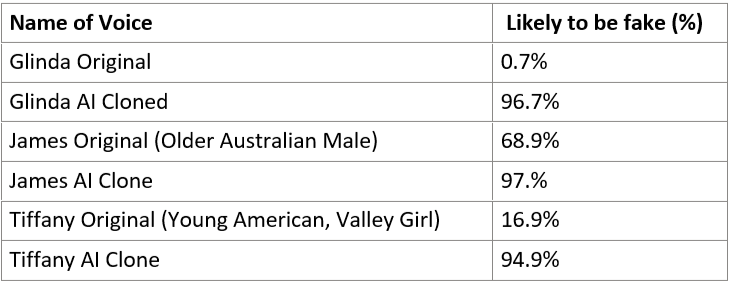

Here are the results from Deepfake Total:

As you can see, Deepfake Total easily told the fake voice from the real one. Two more comparisons showed the same result, with the AI clone easily detected in each case. Interestingly, the tool produced a false positive, rating an original voice (James, an older Australian male) as almost 70% likely to be fake.

AI Voice Cloning Can Fool the Detectors

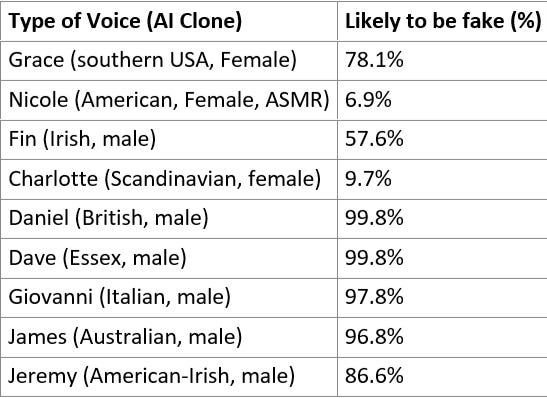

I ran a similar test with the professionally cloned AI generated examples provided on the site (all speaking English). Again, the clones have been trained on hours of recordings from a real voice, and are then processed for around 4 weeks.

When I ran the voice clones through Deepfake Total, the program was fairly accurate at telling which voices were AI generated. I ran 12 of these professionally cloned voices and it spotted 10 out of 12 as fake. Interestingly, two of the AI clones were able to fool the AI detection tool.

The two cloned voices which tricked the system were voices of younger women. One was an ASMR-style voice, Nicole (6.9%), used for meditative guides or to give an intimate feel to narrative projects. The second was Charlotte (9.7%), described as English but with a Swedish melody. The samples are below:

“Nicole” A professionally cloned AI voice

“Charlotte” A professionally cloned AI voice

I then tested the top six AI-generated voices in the marketplace on the ElevenLabs website.

All of the top voices were quite easily detected to be fake, which is a positive sign for the future of authenticity.

As a comparison, I also tested Australian voices from the company Murf.ai. While I found the accents to sound more Australian than those from ElevenLabs, the quality of voice tended to lag behind ElevenLabs, with more artefacts and stilted speech. I ran the 12 samples from the Murf.ai site through Deepfake Total, which spotted all of the fakes. One was rated at 63% likely to be fake, though the rest were rated above 98% likely to be fake.

Dubbing AI and the Bias of Deepfake Detectors

I briefly tested the dubbing capability of the platform, and the ability of the technology to detect fakes in foreign languages. I first converted a speech by Donald Trump into Danish. Deepfake Total gave a fake probability of 99.4%.

I also dubbed a monologue from Sex and the City into Chinese (don't ask why) - which showed only a 25% probability of being fake.
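To make the numbers above easier to compare, here is a minimal Python sketch that collects the individual scores quoted in this article and checks them against a cut-off. The 50% threshold and the function names are my own assumptions for illustration; Deepfake Total only reports a percentage and does not prescribe a cut-off. The score for James is rounded from "almost 70%".

```python
# Scores are the "likely fake" percentages quoted in this article.
# Each entry: (sample name, score in percent, whether it really is AI generated).
SAMPLES = [
    ("Nicole (ElevenLabs clone)", 6.9, True),
    ("Charlotte (ElevenLabs clone)", 9.7, True),
    ("James (original recording)", 70.0, False),  # "almost 70%", rounded
    ("Trump speech dubbed into Danish", 99.4, True),
    ("Sex and the City dubbed into Chinese", 25.0, True),
]

# Assumed cut-off: scores at or above this are treated as "flagged as fake".
THRESHOLD = 50.0


def flagged_as_fake(score, threshold=THRESHOLD):
    """Return True if the score would count as a 'fake' verdict."""
    return score >= threshold


def find_errors(samples, threshold=THRESHOLD):
    """Split the detector's mistakes into missed fakes and false alarms."""
    missed = [name for name, score, is_fake in samples
              if is_fake and not flagged_as_fake(score, threshold)]
    false_alarms = [name for name, score, is_fake in samples
                    if not is_fake and flagged_as_fake(score, threshold)]
    return missed, false_alarms


missed, false_alarms = find_errors(SAMPLES)

# Detection rate for the 12 professionally cloned voices (10 of 12 spotted).
detected, total = 10, 12
detection_rate = round(100 * detected / total, 1)

print("Missed fakes:", missed)
print("False alarms:", false_alarms)
print("Detection rate on professional clones:", detection_rate, "%")
```

Under that assumed cut-off, the two cloned voices and the Chinese dub slip through, while the original recording of James is wrongly flagged.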

Bias in Deepfake Detection Tools

The effectiveness of these tools on dubbed audio is important, as researchers have been worried about how well AI detection works in other languages, pointing out that detection tools are much less reliable in languages other than English.

In a new paper, the researchers behind Deepfake Total point out that there is a bias in the deepfake audio data used to train detection models.[2] Because this data is predominantly in English, models are limited in their ability to detect deepfakes in other languages, potentially discriminating against non-English speakers. The paper outlines plans to release an open source dataset covering multiple languages to help train new deepfake detection tools. As deepfakes are increasing in many languages, the creation of this dataset aims to help global efforts against audio spoofing and deepfakes.

Commercial Generative Voice AI

Like it or not, you will soon be hearing more and more AI-generated voices around you. Audible is already experimenting with what it calls "virtual voice", where books are read by AI models. Searches for virtual voice already return over 10,000 results, mainly in romance and erotica. The books come with a disclaimer at the beginning: "this book is narrated with virtual voice, computer generated narration for audio books".

Acast, the prominent podcasting platform, has teamed up with news.com.au, an audio company and an AI company to produce AI-generated real-time news headlines. Interestingly, Acast's own community guidelines have a section banning inappropriate content, one category of which is "AI or other automatically created content". Spotify and iHeartRadio have also been experimenting with converting some of their biggest podcasts into different languages through the use of AI. The ElevenLabs YouTube channel has its own example of a podcast, dubbed into 5 different languages.

It seems evident that we are moving toward a future in which AI generated voices will be reading us headlines or books before we go to bed.

What Use is an AI Voice Clone Anyway?

One question I kept considering is: what is the real use case for AI cloned voices, beyond crime and deception? ElevenLabs’ terms of service prohibit using a clone without someone’s consent, except in a few circumstances. The company points out a number of use cases on its site, under its safety conditions. Here are some of the things it suggests (note, I do not pretend to know the law or have the ability to check these claims):

For private study, for example having a paper for school read to you in any voice you like.

For education, a lecturer can generate a clone of any public figure to read a poem or a reading to the class. They do not need permission from the poet or publisher.

For parody or satire, anyone can generate a voice clone of Donald Trump without permission, as long as it is clearly for humor and is cited as being AI generated.

For content criticism, a film reviewer could create a voice clone of the lead actor in a film to narrate the review, as long as the review isn’t defamatory and uses publicly available content.

For a quotation, using a voice clone might be OK, but it is not clear what the law is if you use someone else’s voice without their permission.

For political speech, you could use a voice clone of someone without their permission if it contributes to debates of public interest. A journalist could use text from a report or book and have it spoken by a voice clone of the author.

Concluding thoughts

Generative AI voice clones have come a long way, and gone are the days of robotic-sounding text-to-speech tools. The new wave of voices, which are trained on human speech patterns, will sound more realistic and more lifelike. We will soon hear them in podcasts, web newscasts and advertisements, as well as in deception, scamming and fraud. While the tools that detect these deepfakes are currently effective, new generative models are posing challenges, and some are even able to trick the detectors.

Note: The tests I performed in this article are by no means scientific; there were many variables which I did not account for. The aim was to give a very simple demonstration of both the AI tools and the detection tools. Maybe someday I will make it a more rigorous study. I also have no stake in any of the companies mentioned.

[1] See the excellent report by Recorded Future, “I Have No Mouth, and I Must Do Crime” (2023), https://www.recordedfuture.com/i-have-no-mouth-and-i-must-do-crime

[2] Müller, N. M., Kawa, P., Choong, W. H., Casanova, E., Gölge, E., Müller, T., ... & Böttinger, K. (2024). MLAAD: The Multi-Language Audio Anti-Spoofing Dataset. arXiv preprint arXiv:2401.09512.