Generative AI - breaking or fixing democracy? The Impossible Quest for Politically Neutral AI

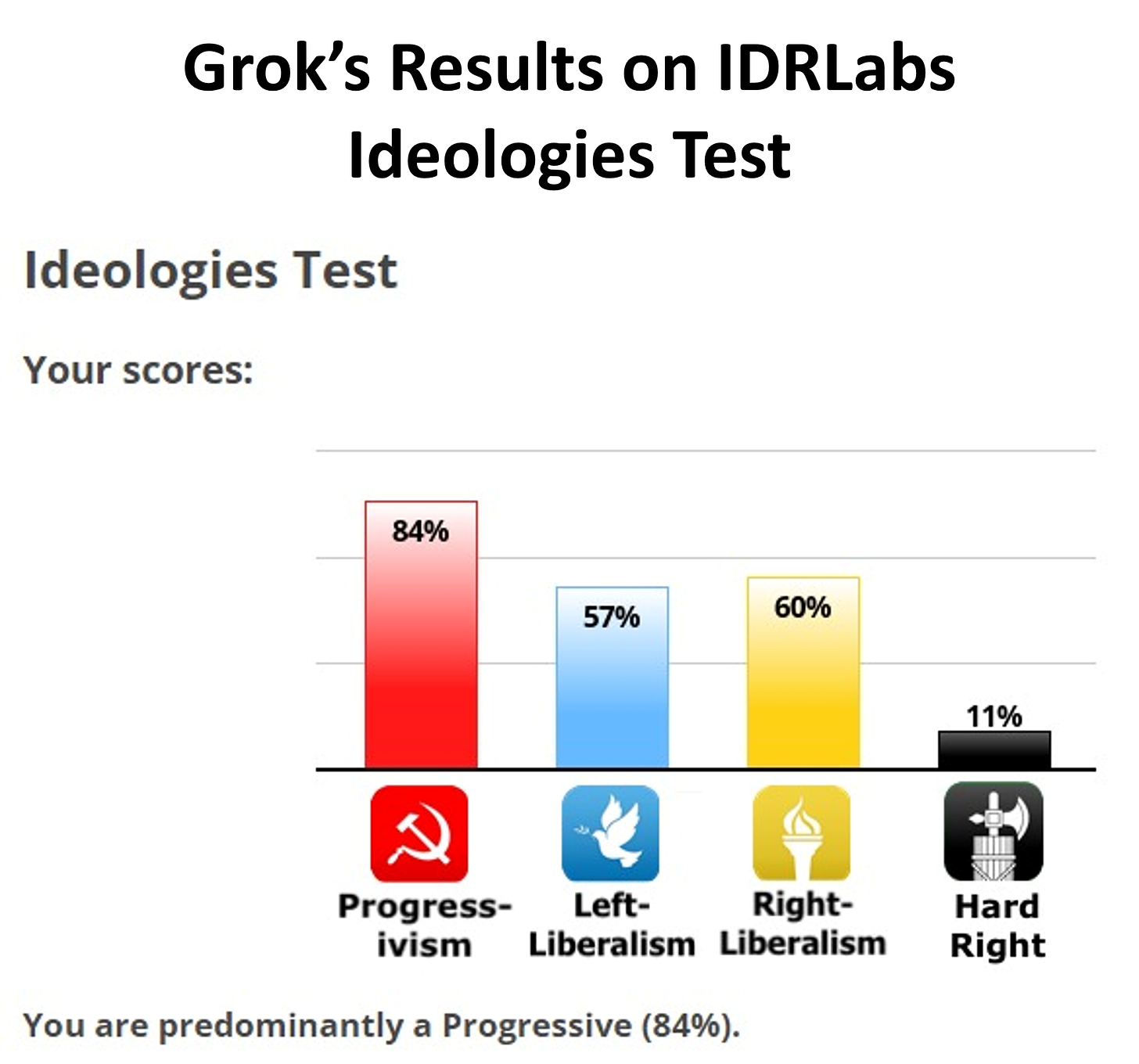

Grok’s failed attempt at being anti-woke shows the difficulty of building an unbiased AI. The way AI is trained on political topics might even threaten the future of democracy. Is open-access AI the solution?

Elon Musk recently criticised OpenAI’s ChatGPT for perpetuating what he called the “woke mind virus”. ChatGPT, the argument runs, is too liberal, left-leaning or “woke” in how it answers politically charged questions. Musk's response was the release of the "edgy" Grok, a chatbot with a "rebellious streak". However, if the promise was a chatbot that replied to “spicy” questions in a way that would please the anti-woke crowd, it seems to have failed.

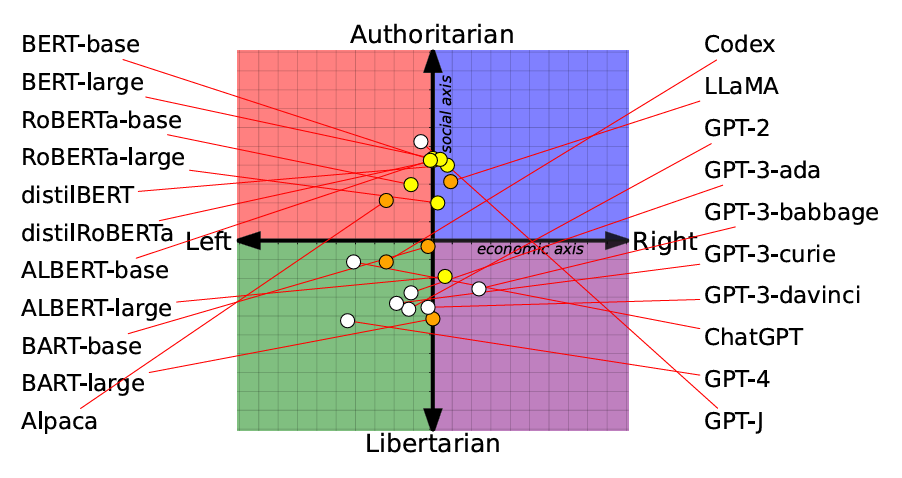

In one example, Grok replied “yes”, trans women are in fact real women, an answer that is decidedly not anti-woke. Researcher David Rozado subsequently found that Grok responded to questions with a left-leaning libertarian bent, similar to that of ChatGPT [1].

ChatGPT's Political Leanings

Interestingly, the claim that ChatGPT is too woke (whatever that really means) is supported by other research. A recent paper measured the political leanings of various chatbots on the market and found that GPT-4 was the most left-leaning and libertarian of those tested [2].

Can We 'Tune' AI's Political Voice?

The possibility that Musk’s researchers at xAI will now train Grok to be less left-leaning raises ethical concerns. The idea that a company can 'tune' a model to talk about topics the way it wants is itself troubling. One possible future sees different generative AI models being trained toward specific political persuasions. Those with left-leaning ideas will favour one model, and those with right-leaning ideas will favour another, deepening political and social division.

Each model will reinforce the particular world view its users want to hear. In this future, generative AI model creation will be fractured along political lines.

The Inevitability of Bias in Generative AI

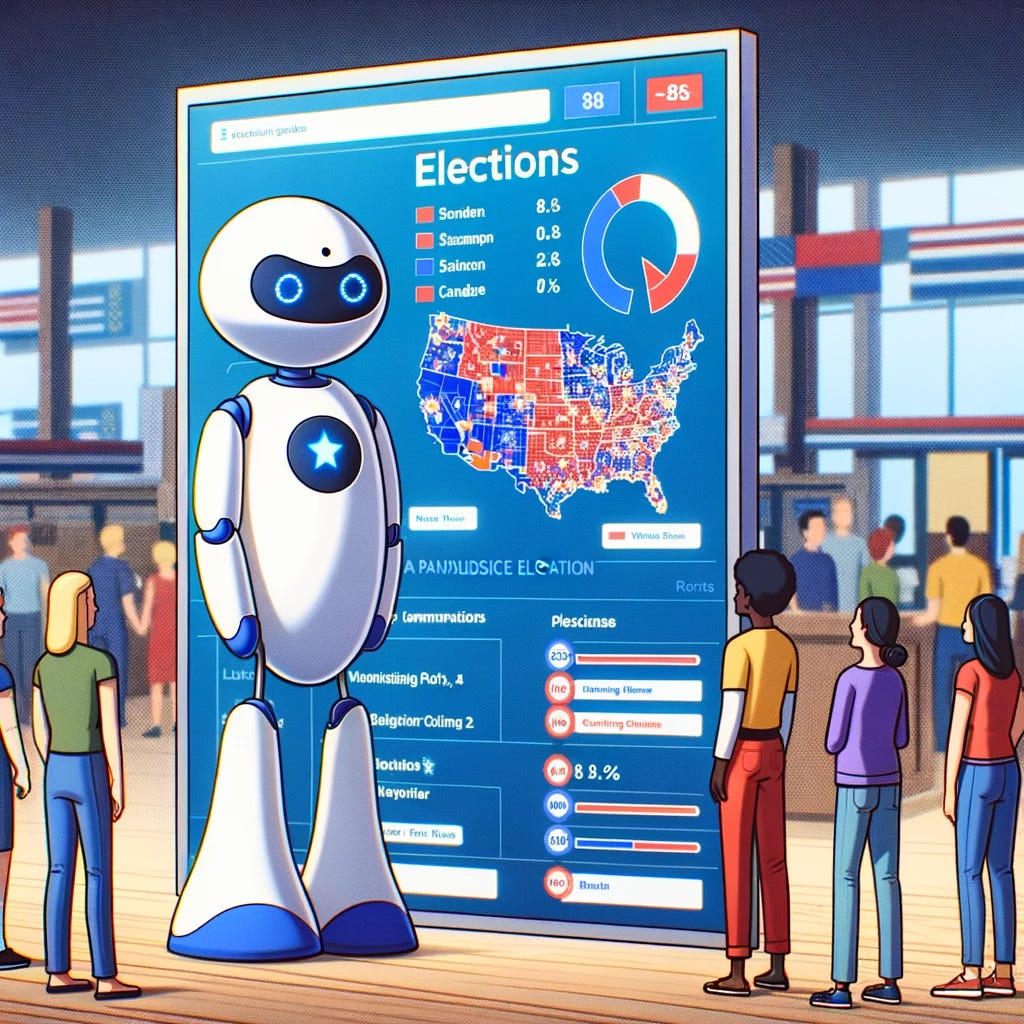

It seems impossible to avoid coding some form of bias into generative AI without nullifying it completely. Last week researchers released a paper on how reliably Microsoft's Copilot (formerly known as Bing Chat) provides accurate, good-quality information during elections. It found that the chat responses consistently contained inaccurate information about elections in Switzerland and Germany last year. One-third of the responses contained factual errors, and 40% of the time it dodged questions about politics altogether.

Putting aside the obvious problem of the factual errors, the manner in which generative AI is trained to discuss political topics is a broad concern for the future of information and democracy in general.

The Dilemma of Safeguards in AI Political Responses

The probable reason for Bing Chat’s evasion of political topics is that there are guardrails or safeguards in place, so that it doesn’t answer questions about candidates in a potentially negative or overtly positive way. But how useful, then, is a tool that does not answer even simple questions about an election candidate? Or only does so half the time? Without some form of training, an AI bot becomes next to useless as a source of information or as a tool for conversation.

We expect it to be able to discuss topics such as race, sexuality, abortion, religion and politics. If we also don't want it spouting racist or offensive material, like Meta’s earlier AI iteration BlenderBot, then it has to be told not to. This means it has to be taught how to talk about certain topics, and how it does this depends on those training it.

The Human Factor: Bias in AI Programming

Perhaps most important to consider is that the bias is not something the AI creates. Rather, it represents the beliefs or biases of the humans who are programming it. Because of this, there is an immediate risk of generative AI being weaponised to reinforce partisan political beliefs.

There are already examples of AI being trained to answer political questions in specific ways. The Chinese-created chatbot ERNIE Bot offers state-approved answers, dodging questions about events in Tiananmen Square in 1989.

In my naïve way I would like to think that generative AI could be reliably used as a source of impartial truth. But what we are seeing is that information, or how we talk about information, is nearly impossible to separate from its social context.

The Open Access Solution?

Up until now, control over generative AI has been left to a few major players. We have also necessarily relied on the philanthropy and good grace of companies such as OpenAI and Anthropic to program chatbots to generally do no harm. However, for many this is a democratic problem: why should there be a concentration of power over who controls the narrative of generative AI, or even how we are allowed to interact with it?

Also, is it wise to rely purely on the beneficence of large companies to develop technology in a way that serves the interests of the wider community? I am reminded of Google subtly removing the "Don't be Evil" clause from its corporate code of conduct. It seems inevitable that, at some point, commercial interests will collide with the ideal of a responsible AI future.

The Rise of Open Access AI: Breaking the Oligopoly

In response to the threat of generative AI being controlled only by large companies, a new wave of “open access” AI models is now coming onto the market. The promise of open access AI (not to be confused with OpenAI!) is access to generative AI without the guardrails. The ideal of open-source competition is a positive one, in which power is put into the hands of the community instead of being controlled by a few big players. One such company is Mistral AI, which promises an open approach to generative AI that creates an “alternative to the oligopoly”. It claims to provide a path to fighting the censorship and bias established in proprietary models. This claim has evidently attracted interest, as the company has reportedly now raised 385 million euros and is valued at $2 billion.

No Rules: The Dark Side of AI Without the Guardrails

We have seen AI guardrails being removed before, with programs such as WormGPT or FraudGPT recently being sold on the dark web. These gave users 'cracked' versions of large language models which could be used to write malicious code or draft phishing emails for business email compromise (BEC) attacks. The publishers of WormGPT, who ultimately shut down the project due to negative media attention, wrote that they did not specifically make the program to be used by hackers, but argued instead that the tool offered a free and unrestricted GPT.

The Debate Over Unrestricted AI

Open access AI models offer a similarly unrestricted generative AI experience. Mistral AI offers guardrailing to prevent toxic outputs as an optional extra, though it strongly recommends using it. Without guardrails, the model will just do what it is told.

A leaked Google document from earlier this year showed how much of a threat the company considers open access AI to be. The document read, “People will not pay for a restricted model when free, unrestricted alternatives are comparable in quality”.

Can Open-Access AI Make AI Fair for Everyone?

Is this the way forward for generative AI? Should generative AI be put in the hands of the public without any guardrails, so that the public can decide how best to use it?

Could this approach also counteract the biases of large company models and pave the way for a more democratic AI? If it can, then Twitter feuds about the political leanings of ChatGPT or Grok will become increasingly irrelevant.

[1] For the full testing of Grok’s political leaning see:

See also: Rozado D. The Political Biases of ChatGPT. Social Sciences. 2023; 12(3):148. https://doi.org/10.3390/socsci12030148

[2] Feng, S., Park, C. Y., Liu, Y., & Tsvetkov, Y. (2023). From Pretraining Data to Language Models to Downstream Tasks: Tracking the Trails of Political Biases Leading to Unfair NLP Models. https://doi.org/10.48550/arXiv.2305.08283