Ethics shopping and digital sweatshops - is the "AI Ethics Boom" just for show?

Without regulation, companies can mix and match ethical guidelines, frameworks, codes and principles to suit their practice. We look at 5 ways companies can skirt their ethical responsibilities.

In a recent keynote address, Australian Securities and Investments Commission (ASIC) Chair Joe Longo said that current regulation around AI may not be sufficient to prevent harm or unfair practices. However, he also made the point that AI is NOT the wild west: Australian companies can still be held responsible under existing regulations. He cited the recent case against insurer IAG, which alleges that the company used an algorithm to raise premiums for customers predicted to stay with the company, while offering lower prices to those predicted to be likely to switch to other insurers. This came after the company was fined $40 million over another algorithm, which did not give advertised discounts to customers who should have been eligible.

In both of these cases, the company is being held liable for the unchecked actions of an AI algorithm. However, it is not clear how existing regulation covers fast-changing AI technologies, and new regulation has been slow to arrive. Longo stated:

"There's a need for transparency and oversight to prevent unfair practices – accidental or intended. But can our current regulatory framework ensure that happens? I’m not so sure." (ASIC Chair Joe Longo)

The EU is the closest to comprehensive AI regulation, having passed the EU AI Act, which is soon to be formally adopted into law. Among other things, the Act sets safeguards around general-purpose AI, limits police use of biometrics, and bans social scoring and AI used to manipulate or exploit users' vulnerabilities.

Elsewhere, in the absence of regulation, companies have been encouraged to adopt their own forms of self-governance for AI. In Australia, the most prominent example is the Government’s 8 Artificial Intelligence (AI) Ethics Principles, a voluntary framework intended to be “aspirational”.

But voluntary frameworks and principle-based self-governance don’t always translate easily into practice. I want to highlight a 2019 paper by Luciano Floridi, who writes that even the best efforts of those drafting ethical principles may be undermined by a number of unethical risks.1 He identifies 5 ways in which companies can skirt their ethical responsibilities while, at times, still portraying themselves as doing the “right thing”. He calls these (1) ethics shopping; (2) ethics bluewashing; (3) ethics lobbying; (4) ethics dumping; and (5) ethics shirking.

Ethics Shopping

When Floridi’s paper came out in 2019, 70 recommendations on AI ethics had reportedly been published in the preceding 2 years. While this seems like a lot, a worldwide review of AI ethics released in late 2023 analysed over 200 distinct AI guidelines from public bodies, academic institutions, private companies and civil society organisations. With so many reports and recommendations, the “AI ethics boom” has, in a way, diluted ethical discourse.

The term ethics shopping points to a "market of principles or values", where organisations shop around for an ethics style that matches what they already do, rather than letting ethics drive their actions. Floridi defines it as the malpractice of mixing and matching ethical principles, guidelines, codes, frameworks or whatever else companies can find to justify their current practice.

Along with this is the issue of ambiguous language within these codes and frameworks. If the language is vague, can we be confident that all companies are interpreting the guidelines in the same way?

For example, the first Australian AI principle states: “Throughout their lifecycle, AI systems should benefit individuals, society and the environment.” However, this is open to interpretation: who is to say what “benefit” really means? And what if the benefit to individuals conflicts with the benefit to society?

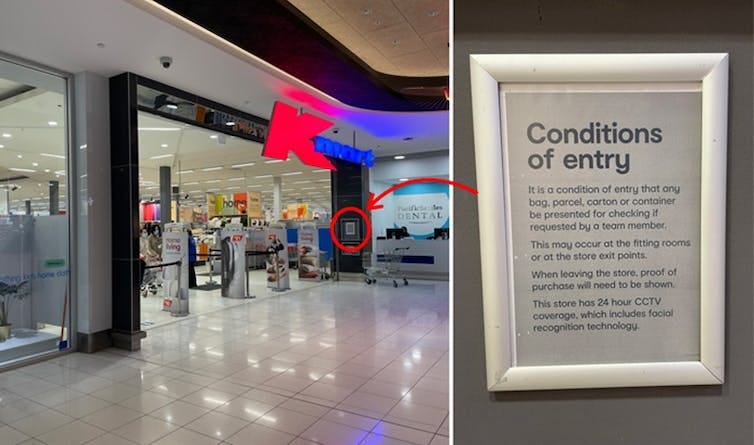

Kmart and Bunnings, for instance, trialled facial recognition technology in their stores, arguing it would protect their staff and stores from people who had previously been banned. The Information Commissioner ordered an investigation into whether this violated the privacy rights of other customers. The investigation was meant to be finished in 2023, but there has still been no official word on the case.

In effect, Kmart and Bunnings argued that this use of AI benefits individuals and society by protecting their staff and keeping their stores safe for customers. The other side of the argument is that this kind of AI use violates people’s privacy and is NOT a benefit. In this case, the Information Commissioner will rule on the legality of the practice. Without further regulation, however, there will be other cases where ethics shopping is used to justify other business practices. The EU's implementation of AI-specific laws is a step forward in setting clear and enforceable boundaries for ethical practice in AI.

Ethics Bluewashing

We can compare this to practices such as "greenwashing" or "sportswashing", which polish an organisation's image without changing its behaviour. A greenwashing company, for example, appears greener or more environmentally friendly without actually being so, perhaps by changing its packaging to feature green imagery. Bluewashing is the digital equivalent: making unsubstantiated or misleading claims about ethical values in order to appear more ethical than a company actually is.

Like greenwashing, it is a form of misinformation. It distracts the target audience from anything that is actually going wrong, masks behaviour without changing it, aims to save money, and wins some goodwill. Combine this with ethics shopping, and companies can highlight a cherry-picked principle that fits their current practice and then publicise it widely to look ethical, without making any real changes or improvements.

Consider the number of ethical and responsible AI commitments that companies are now signing. Last week, 8 tech companies, including Microsoft and Mastercard, pledged to build "more ethical" AI in line with the UNESCO Recommendation on the Ethics of Artificial Intelligence (2021). In July and September 2023, 15 of the biggest AI companies, including Google, Microsoft, OpenAI and Meta, signed the White House's voluntary commitments to manage the risks posed by AI.

This is not to say that these companies are failing to uphold their pledges, but signing these kinds of commitments should not automatically be taken as evidence of real changes in practice. As we will see below in the case of ethics shirking, Scale AI, which signed the White House’s commitments, is also accused of running “digital sweatshops” with underpaid staff and withheld wages.

Digital Ethics Lobbying

Companies undoubtedly have input into the future of AI; however, some have warned against letting industry write the rules for AI. Benkler (2019) argues that they "cannot retain the power they have gained to frame research on how their systems impact society or on how we evaluate the effect morally."2

This brings us to digital ethics lobbying. Floridi defines the practice as the malpractice of exploiting digital ethics to influence, delay or avoid good or necessary legislation about the design, development and deployment of digital products or solutions. Companies try to shape the AI narrative to suit their goals while claiming to act in the name of ethical practice. Consider the motivations of someone like Elon Musk, who signed a letter calling for a pause on AI development under the guise of ethics; a pause that would have helped his company catch up in the AI race.

In September 2023, the Australian industry advocacy group Digital Industry Group Inc. (DIGI), which represents some of the world's largest tech firms, called on the government to go slow on AI regulation. The non-profit, set up by founding members Apple, eBay, Google, Linktree, Meta, Snap, Spotify, TikTok, X (formerly Twitter) and Yahoo, was responding to a discussion paper on "Safe and responsible AI in Australia". In its letter, DIGI made its case in ethical language, saying it "supports development of AI, and its applications, underpinned by strong safety principles and a conscious assessment of social and economic benefits." It then went on to advise against new legislation aimed at regulating AI as a technology, advocating instead for building on existing legislation. Here, the language of ethics is used in a self-serving way and presented as an alternative to legislation.

Ethics Dumping

Ethics dumping is the export of unethical research practices to countries with weaker or laxer legal and ethical frameworks. As Nature outlines, ethics dumping (or ‘helicopter research’) occurs when researchers from high-income countries conduct studies in lower-income settings. This can happen in any field of research, from health and biomedicine to digital contexts. For example, a robotics or AI company could conduct research in another country that would be illegal or breach regulations in its home country, and then import the outcomes of that research for future use.

If regulations were in place around the development of AI tools in Australia, for example, could companies skirt these rules via ethics dumping? A company could train an algorithm on biometric data (e.g. for facial recognition) or personal data in another country with no laws or regulations on the practice, then import the trained model for use in products sold in its home country. At the moment, there do not seem to be any clear rules around a practice like this. Even if regulations were in place in Australia, would they extend to a model trained overseas and sold here?

Ethics Shirking

Ethics shirking is the malpractice of doing less "ethical work" wherever the company perceives a lower return on that work. As with ethics dumping, a company might engage in unethical practices in places with already disadvantaged populations, weaker institutions, corrupt regimes, unfair distributions of power or other social problems. This is because the company expects the consequences of unethical practice to be much smaller than they would be in a more developed country. Companies might also feel they have less ethical responsibility if they have a smaller stake or interest in something. Likewise, a company that handles less sensitive information than another might assume its ethical responsibility is smaller.

Ethics shirking, like ethics dumping, applies a double standard: a practice considered unethical in one market is used in another. Large language models, for example, rely on human workers to label data and provide feedback during training. Much of this work has been outsourced to developing countries, where “digital sweatshops” have sprung up. Reportedly, over 2 million workers in the Philippines perform “crowdwork”, paid cents to label images and edit chunks of text used to train AI models and LLMs for companies such as Scale AI. (As mentioned above, Scale AI was also one of the signatories to the White House's voluntary commitments to manage the risks posed by AI.)

The Business and Human Rights Resource Centre claims that Scale AI employs 10,000 workers in the Philippines and has “paid workers at extremely low rates, routinely delayed or withheld payments and provided few channels for workers to seek recourse”. Similarly, OpenAI reportedly used Kenyan workers paid less than $2 per hour to make ChatGPT less toxic. Truly responsible AI would consider the ethics of both how AI models are used and how they are trained.3

What does this mean for AI ethics in Australia? For now, companies and organisations are waiting for enforceable regulation, and in the meantime they fall back on voluntary principles and ethical guidelines. As we have seen, these guidelines carry only so much force. Through ethics shopping or bluewashing, companies that want to appear to take ethics seriously can easily skirt them.

1. Floridi, L. (2019). Translating principles into practices of digital ethics: Five risks of being unethical. Philosophy & Technology, 32(2), 185-193. https://doi.org/10.1007/s13347-019-00354-x

2. Benkler, Y. (2019). Don't let industry write the rules for AI. Nature, 569(7754), 161-162.

3. Similar findings have been made in Latin America; see Miceli, M., & Posada, J. (2022). The Data-Production Dispositif. Proceedings of the ACM on Human-Computer Interaction, 6(CSCW2), 1-37. https://doi.org/10.1145/3555561