Are your Facebook photos feeding the new wave of AI?

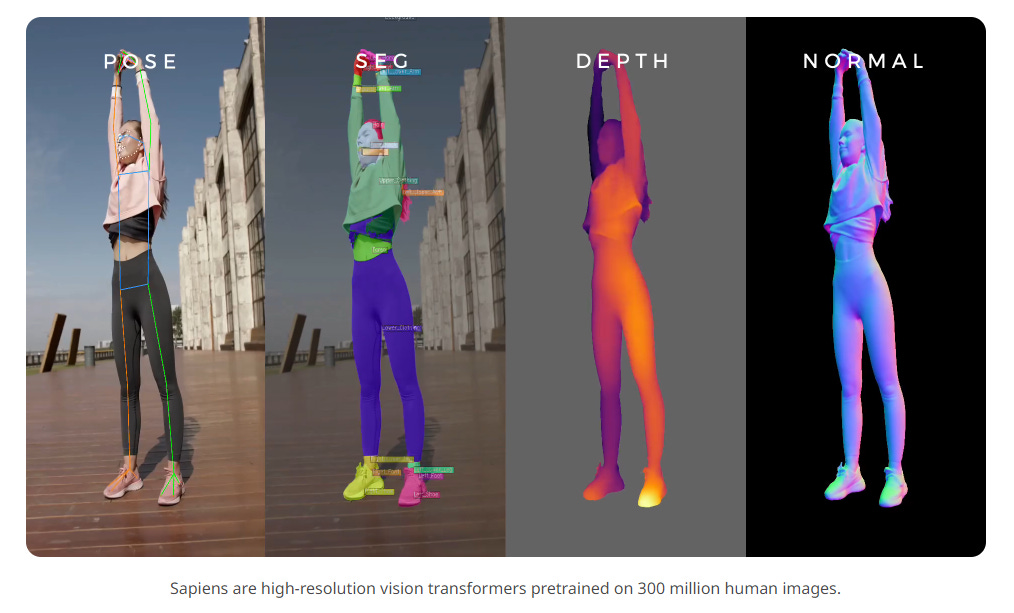

Meta's newest vision model "Sapiens" is the most advanced yet - trained on the back of 300 million "in-the-wild" human images. Is this progress or the latest step in surveillance capitalism?

Last week Meta released a family of AI models for human vision tasks, called Sapiens. The models can effectively segment body parts and track their movement in real time. Think of supercharged motion capture that can recreate minute bodily movements or facial expressions. While this could prove a milestone for computer vision, I am interested in what these models have been built upon.

For the model the researchers utilised "a large proprietary dataset for pretraining of approximately 1 billion in-the-wild images". This was then filtered down to 300 million human images (the resulting dataset being called the Humans-300M Dataset). What isn't stated is where these initial 1 billion "in-the-wild" images come from. Where could a company which owns Facebook and Instagram get 300 million images of humans to train an AI model? [1] I will note that I have no proof of where these images have come from, but there is a distinct lack of transparency in this paper.

Perhaps it shouldn't be shocking that Meta could have used public images to train their AI model. In their terms of service they say that they can use any public information, images or posts to do so, meaning any photo or post which you have not set to ‘private’. However, there is an uneasiness about how often users' pictures or posts are being used to train AI models, probably without users really knowing that it is happening. While there are rightly concerns and public backlash against OpenAI for using artists' work without their consent, less is said about companies using our private experiences to train similar models.

Perhaps it also shouldn’t be surprising, as user data has long been used as AI training data. Generative AI is just the latest step in surveillance capitalism.

New Frontiers of Surveillance Capitalism

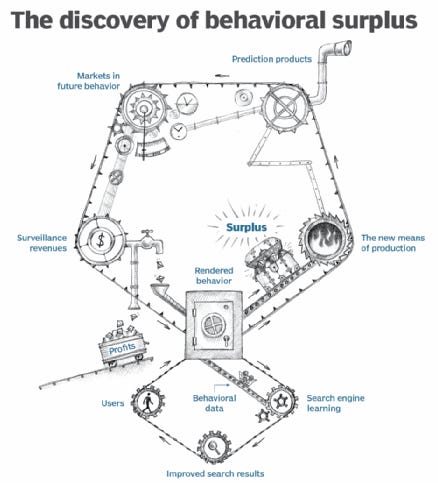

Surveillance capitalism, as described by Shoshana Zuboff in her book The Age of Surveillance Capitalism, has roots in the rise of tech giants such as Google and Facebook. Zuboff’s insight is that organisations take private human experiences and turn them into money-making algorithms. For example, on Zuboff's account, user data typed into search bars like Google's was originally used to improve search results. However, this wasn't very profitable. What came next was the emergence of what Zuboff calls "behavioural surplus". Think of all the extra stuff that goes along with your Google search: your GPS location, what time of day you searched, where from, on what type of device, whether you misspelled something, and what else you were searching for.

This data is used to create algorithms that identify patterns and correlations (e.g. users of this demographic, at this time, are more likely to search for “donuts”). This excess data and these algorithms go into creating "prediction products", which enable Google and Facebook to sell highly targeted ads, or to sell information to data brokers.

You might have heard the phrase, “if you are not the customer, you are the product”. Surveillance capitalism goes further by arguing that you are not even the product; you are the raw material that goes into making the real product, which is prediction. Zuboff argues that Google and Facebook, in particular, have grown incredibly wealthy creating and selling products made by extracting data from users’ private experiences.

Google and Facebook have conquered the ad space. Recent numbers showed that between them they account for over half of the world's online ad revenue (Google 39% and Facebook 18%). In comparison, TikTok has 3% and LinkedIn only 0.9%. These massive profits, according to the idea of surveillance capitalism, have come on the back of monetising and commodifying our personal experiences.

Generative AI is being fed by users

Now, the new frontier of surveillance capitalism seems to be the creation of generative AI models, using publicly available data which users give away for free every day. Meta's AI chatbot is refined using human input. X has said it is using public tweets (or are they “Xs” now?) to train its chatbots. Amazon is using real conversations from Alexa to train its newest Alexa AI models. Even if users know this is happening, once data has been used to train these models, there is no way to later withdraw consent. There are questions about whether it would be fairer to have an opt-in system, rather than opt-out.

There are some things you can do to limit what information Facebook uses. They claim to only use public posts and comments to train generative AI models, so you can set your posts to 'private'. However, any chat you have with Meta AI can be used to train and fine-tune their models.

This is heading toward a consolidation of power in the AI space: those who have access to the largest, cleanest data sets will be able to train the most effective models. Who else but the largest corporations will be able to do this?

[1] Note that previous data sets from Meta have given provenance, attributing the data to third-party photo companies; see for example: https://ai.meta.com/datasets/segment-anything/. I am waiting to see if they provide the source of the Humans-300M Dataset, and I am happy to be corrected on its source. I did try to reach out to the researchers on both LinkedIn and Twitter, with no reply.